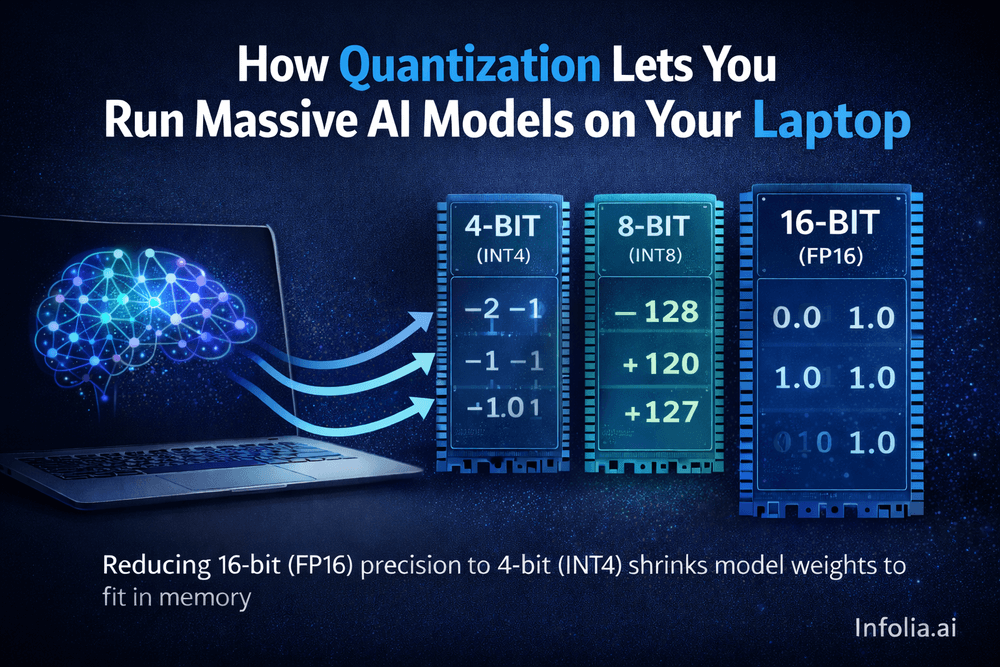

Model Quantization: How a 70B Model Fits on Your Laptop

Learn how quantization shrinks AI models by 2-4x with minimal quality loss, and why it matters for cost and deployment.

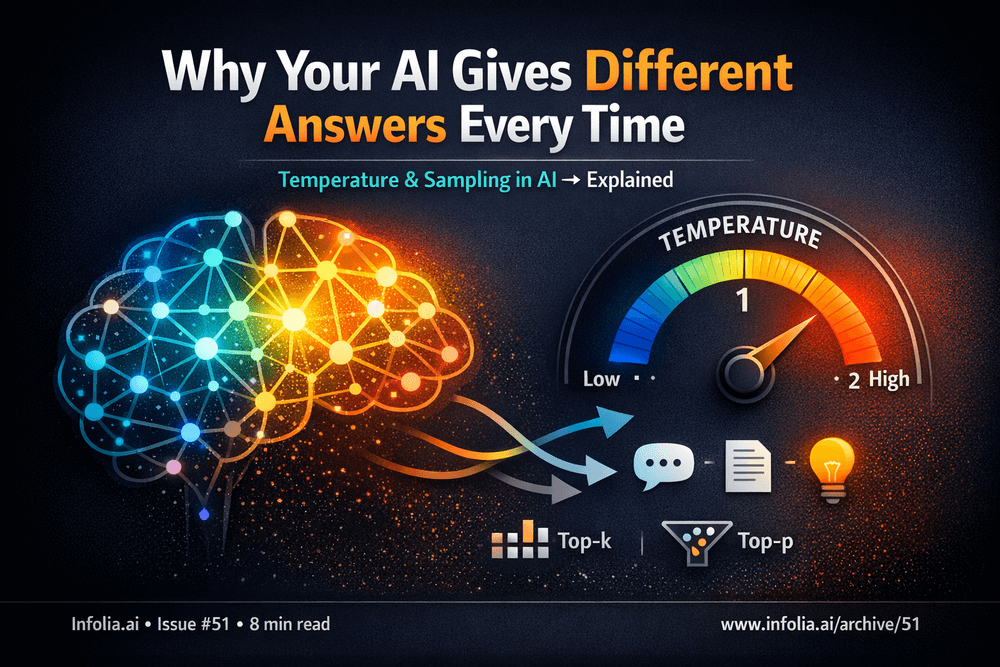

Temperature & Sampling: Why Your AI Gives Different Answers Every Time

Learn how temperature, top-p, and sampling settings control AI output randomness and when to use each.

Tokens & Context Windows: Why ChatGPT Has Limits

Tokens & Context Windows Explained

Three Ways to Customize LLMs: Prompting, RAG, and Fine-Tuning

Three approaches to customizing LLMs: an implementation guide with code examples.

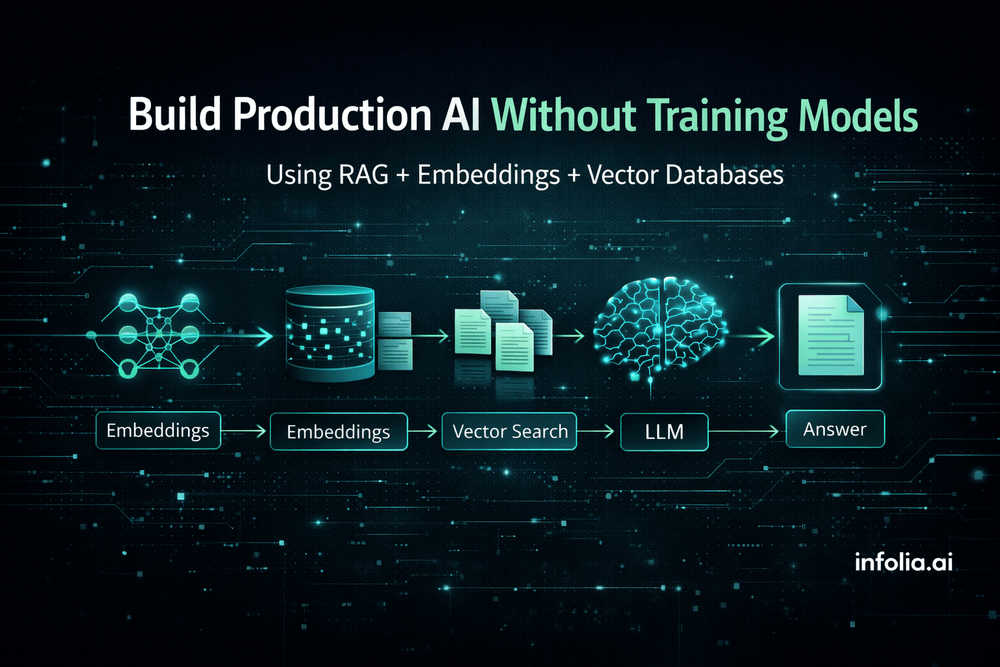

What is RAG? Building Production AI Without Training Models

Production AI without model training: how RAG works, with code examples.

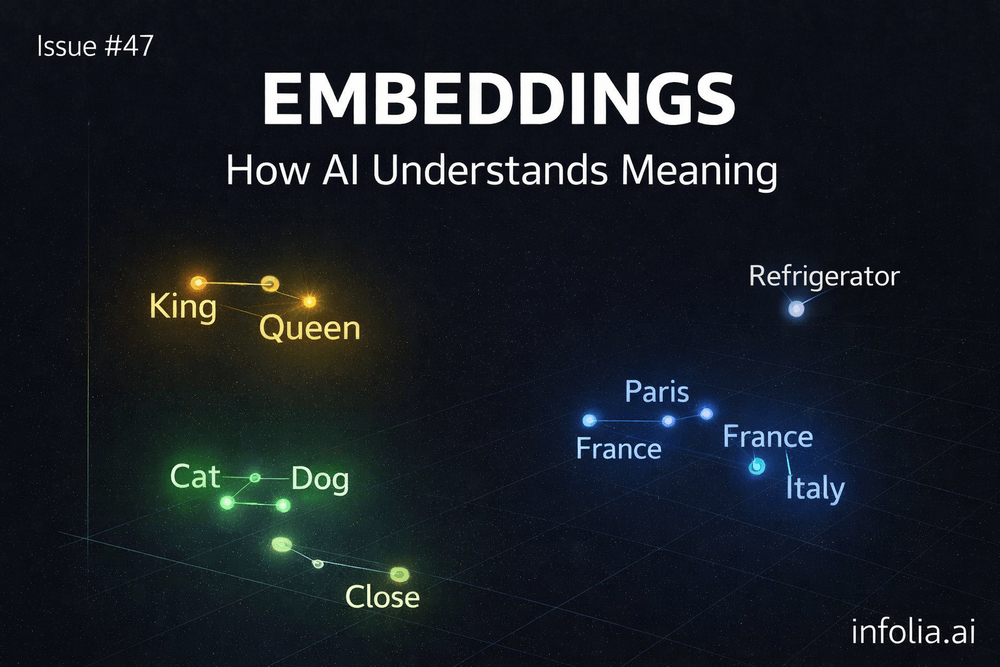

Embeddings & Vector Spaces: How AI Understands Meaning

How AI represents the meaning of words as vectors.

Transformers: The Architecture That Changed Everything

The architecture behind ChatGPT, Claude, and every major AI breakthrough, explained simply.

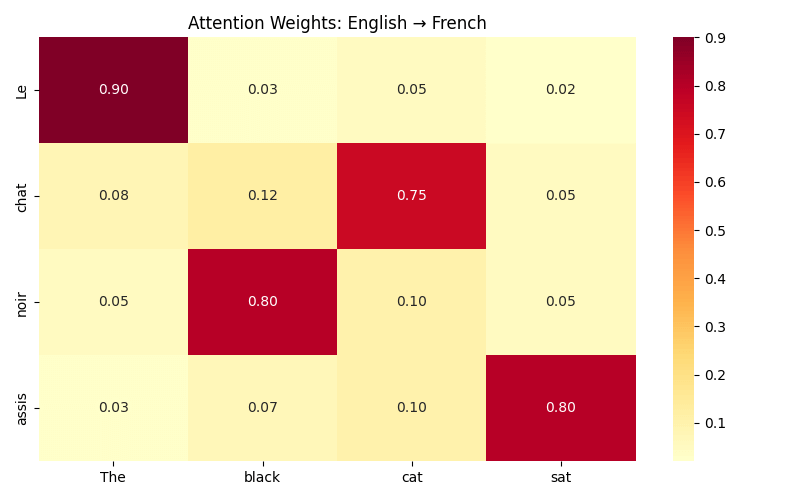

Attention Mechanisms: Teaching Neural Networks Where to Look

How attention builds weighted combinations of embeddings, and why it replaced RNNs in modern AI.

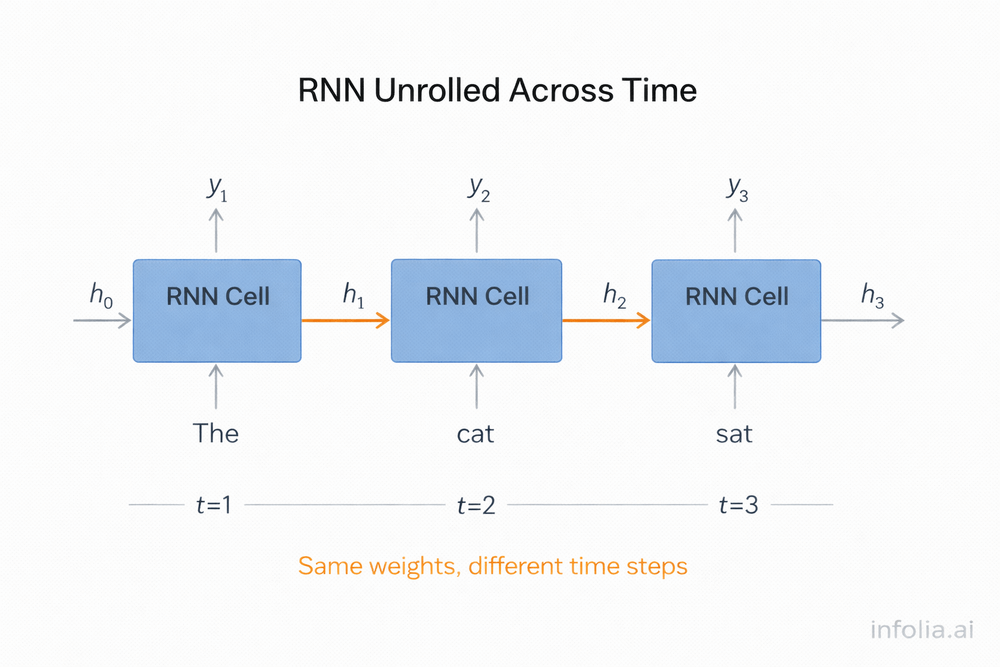

Recurrent Neural Networks: Processing Sequences and Time

How RNNs process sequences through hidden-state loops and LSTM gates.

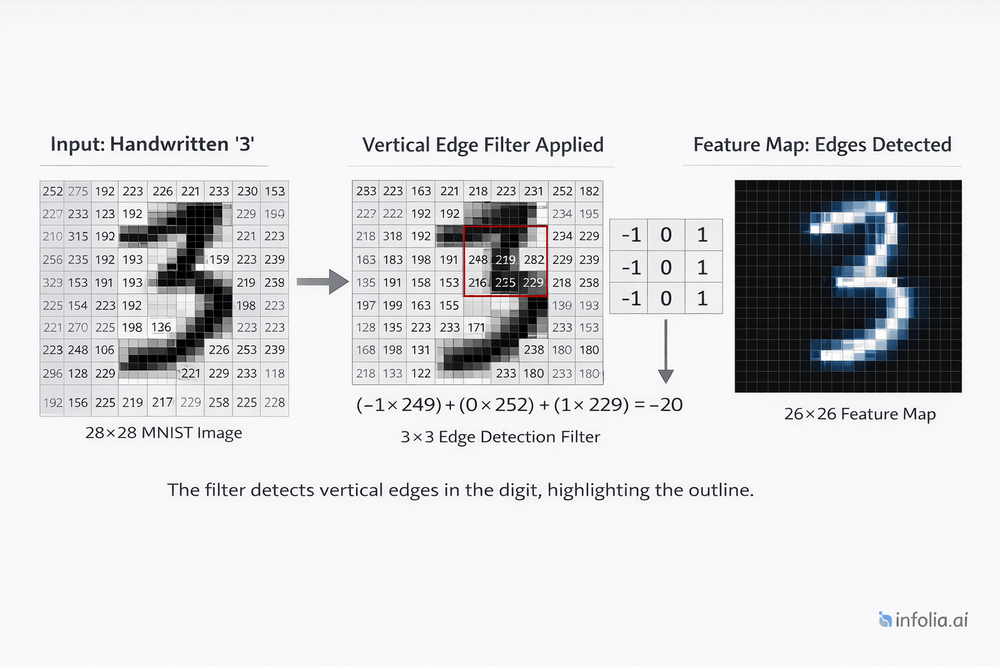

Convolutional Neural Networks: How AI Sees Images

From edge detection to face recognition: how CNNs use sliding filters and parameter sharing to understand images with up to 1000x fewer parameters than fully connected networks.

AI in 2026: The 'Show Me the Money' Year

Week 1 of 2026: ROI pressure, quantum bets, the transformer plateau, and physical AI.

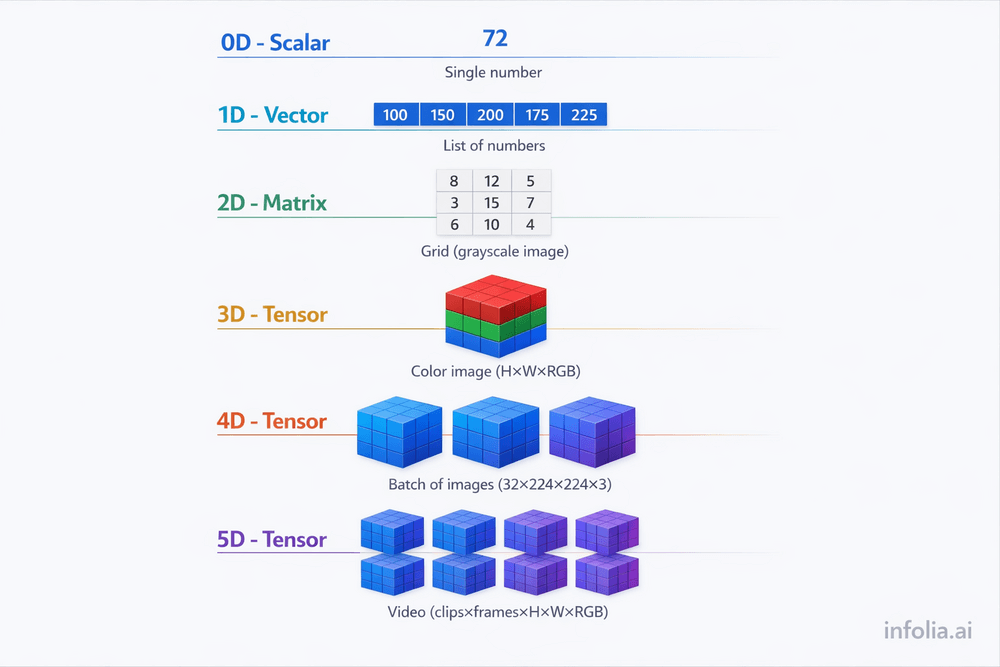

What Are Tensors? (And Why Modern AI Needs Them)

The multi-dimensional data structures that power modern AI architectures.

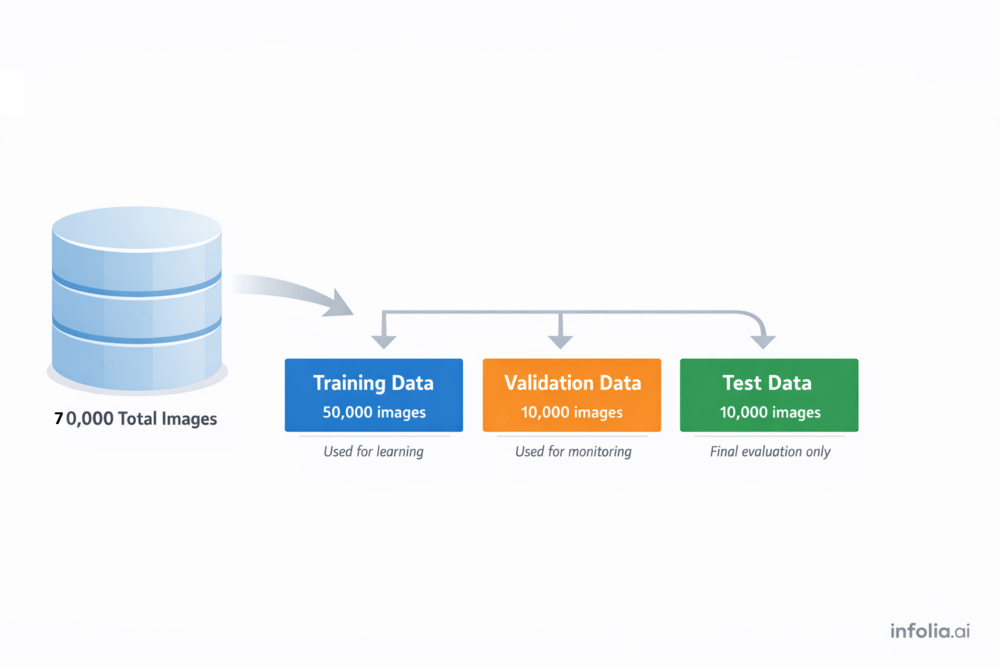

Training Neural Networks: The Complete Learning Loop

The complete 7-step training loop: epochs, batches, overfitting, and when to stop.

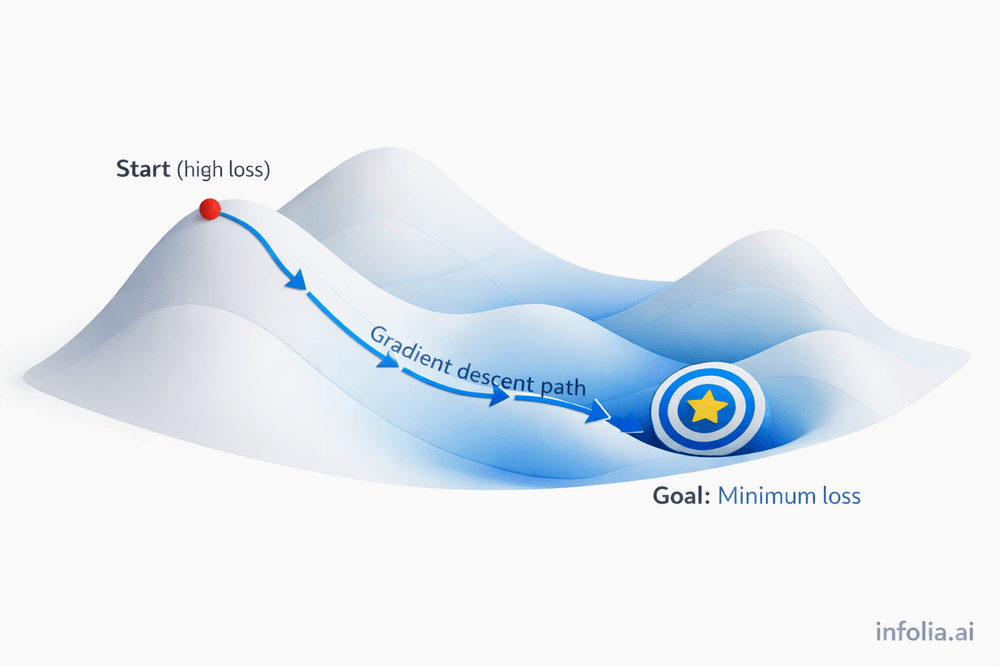

How Neural Networks Actually Learn (Gradient Descent)

What gradient descent is, how it uses gradients to update weights, and why this optimization algorithm makes neural network learning possible.